Object Manipulation

Object Manipulation Video Coming Soon

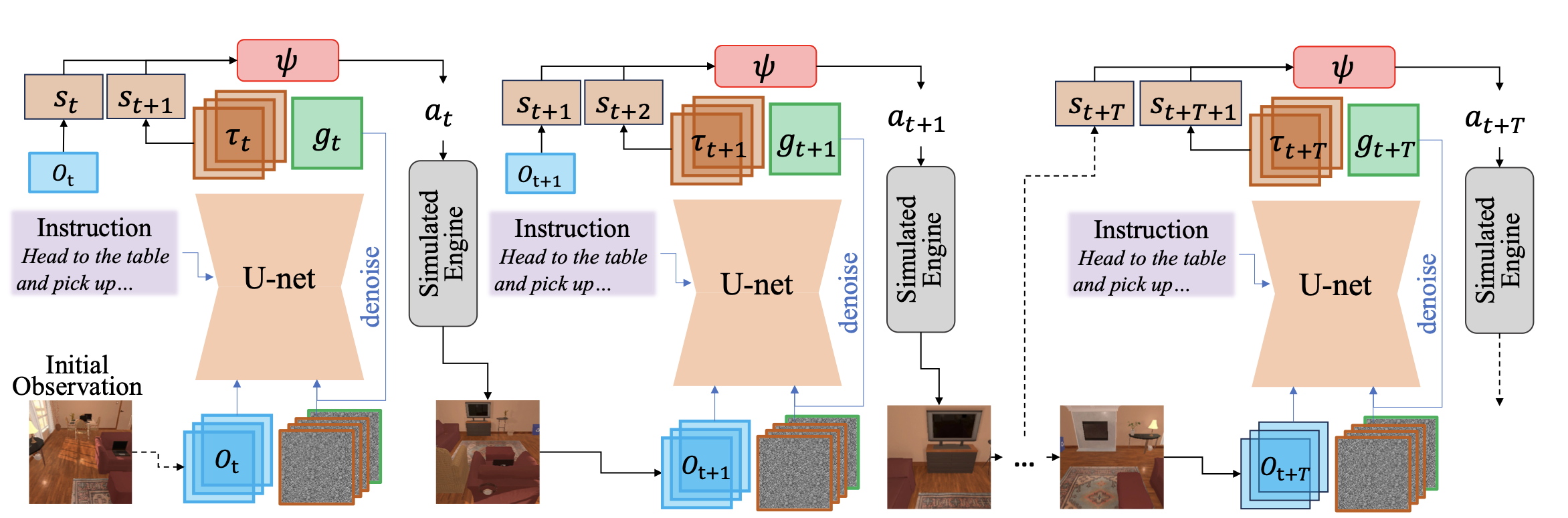

Task planning for embodied AI remains one of the most challenging problems, and the community has not yet reached a consensus on how to formulate it. In this paper, we tackle the problem with a unified framework consisting of an end-to-end trainable method and a planning algorithm. Specifically, we propose a task-agnostic method named 'planning as in-painting'. In this method, we use a Denoising Diffusion Model (DDM) for plan generation, conditioned on both language instructions and perceptual inputs in partially observable environments. Partial observation often causes the model to hallucinate parts of the plan. Our diffusion-based method therefore jointly models the state trajectory and the goal estimate to improve the reliability of the generated plan, given the limited information available at each step. To better leverage information newly discovered during plan execution and achieve a higher success rate, we propose an on-the-fly planning algorithm that works together with the diffusion-based planner. The proposed framework achieves promising performance on various embodied AI tasks, including vision-language navigation, object manipulation, and task planning in a photorealistic virtual environment.

The figure above illustrates the proposed framework, in which the diffusion-based planner is integrated into the on-the-fly planning algorithm.
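The interplay between a planner and on-the-fly replanning can be illustrated with a toy sketch. This is not the paper's implementation: `stub_planner` is a hypothetical stand-in for the diffusion-based planner (it simply walks toward the current goal estimate on a grid), and `observe`, `plan_on_the_fly`, `view_radius`, `horizon`, and `execute_steps` are all names and parameters assumed for illustration. The sketch only shows the general loop of planning under a goal estimate, executing a few steps, and replanning once new observations arrive.

```python
from typing import List, Optional, Tuple

State = Tuple[int, int]


def stub_planner(state: State, goal_guess: State, horizon: int) -> List[State]:
    """Hypothetical stand-in for a learned planner.

    Returns a state trajectory of length `horizon` that moves one grid cell
    per step toward the current goal estimate (x first, then y).
    """
    x, y = state
    gx, gy = goal_guess
    trajectory = []
    for _ in range(horizon):
        if x != gx:
            x += 1 if gx > x else -1
        elif y != gy:
            y += 1 if gy > y else -1
        trajectory.append((x, y))
    return trajectory


def observe(state: State, true_goal: State, view_radius: int) -> Optional[State]:
    """Partial observation: the goal is seen only when it is within view_radius."""
    dist = max(abs(state[0] - true_goal[0]), abs(state[1] - true_goal[1]))
    return true_goal if dist <= view_radius else None


def plan_on_the_fly(
    start: State,
    true_goal: State,
    prior_goal: State,
    view_radius: int = 2,
    horizon: int = 8,
    execute_steps: int = 2,
    max_iters: int = 50,
) -> List[State]:
    """Alternate between planning, partial execution, and re-observation."""
    state, goal_guess = start, prior_goal
    path = [state]
    for _ in range(max_iters):
        seen = observe(state, true_goal, view_radius)
        if seen is not None:
            goal_guess = seen  # replace the estimate with the observed goal
            if state == seen:
                return path  # goal reached and confirmed by observation
        plan = stub_planner(state, goal_guess, horizon)
        # Execute only the first few steps, then replan with fresh observations.
        for next_state in plan[:execute_steps]:
            state = next_state
            path.append(state)
            if state == true_goal:
                break
    return path


if __name__ == "__main__":
    print(plan_on_the_fly(start=(0, 0), true_goal=(5, 4), prior_goal=(6, 6)))
```

In this toy setting the agent starts with an incorrect goal estimate, corrects it once the goal enters its field of view, and replans from there. Executing only a short prefix of each plan is one simple way to let new observations influence later steps.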


Vision-Language Navigation Video Coming Soon

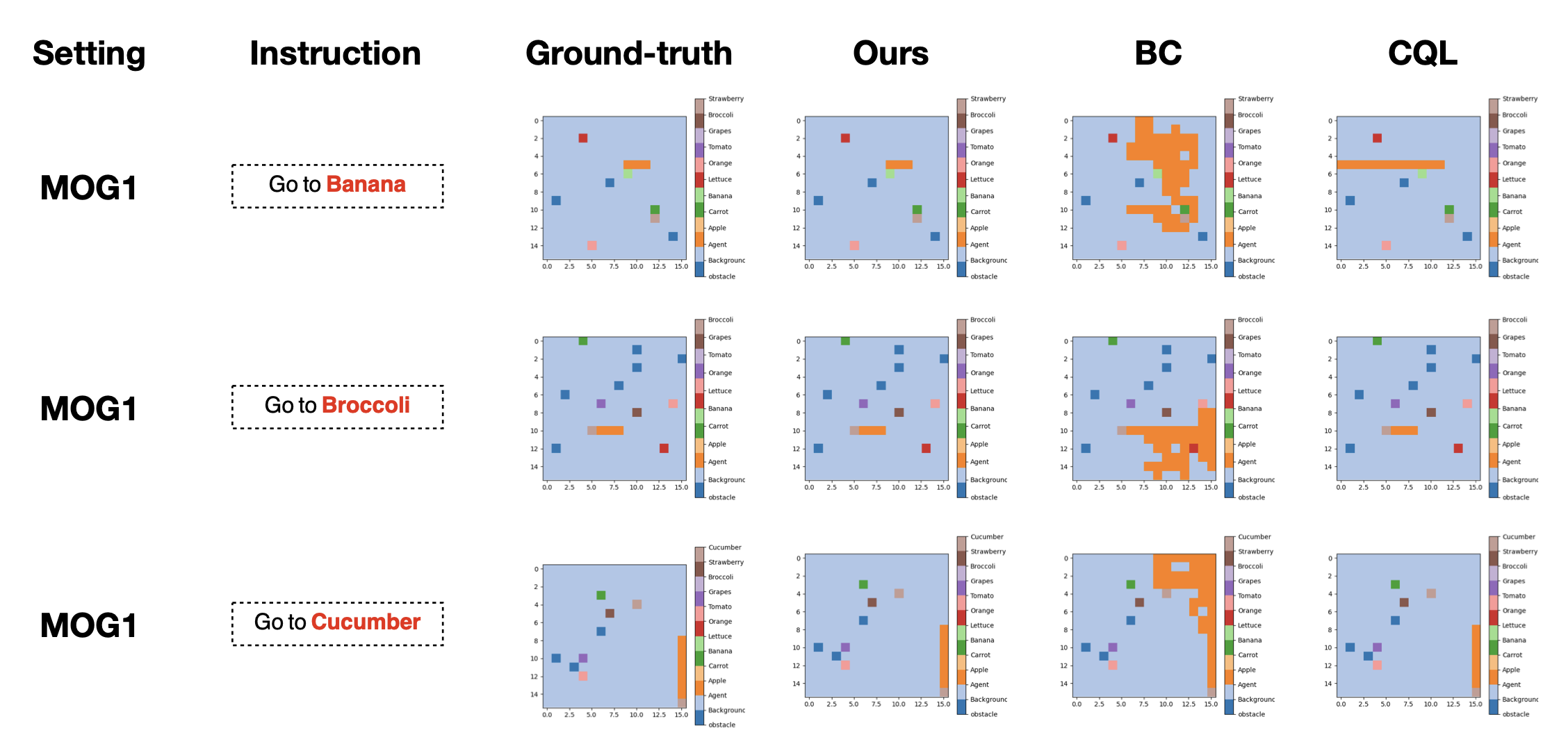

Comparative Analysis: Our model replicates the ground-truth paths with higher fidelity than baseline models such as BC and CQL.

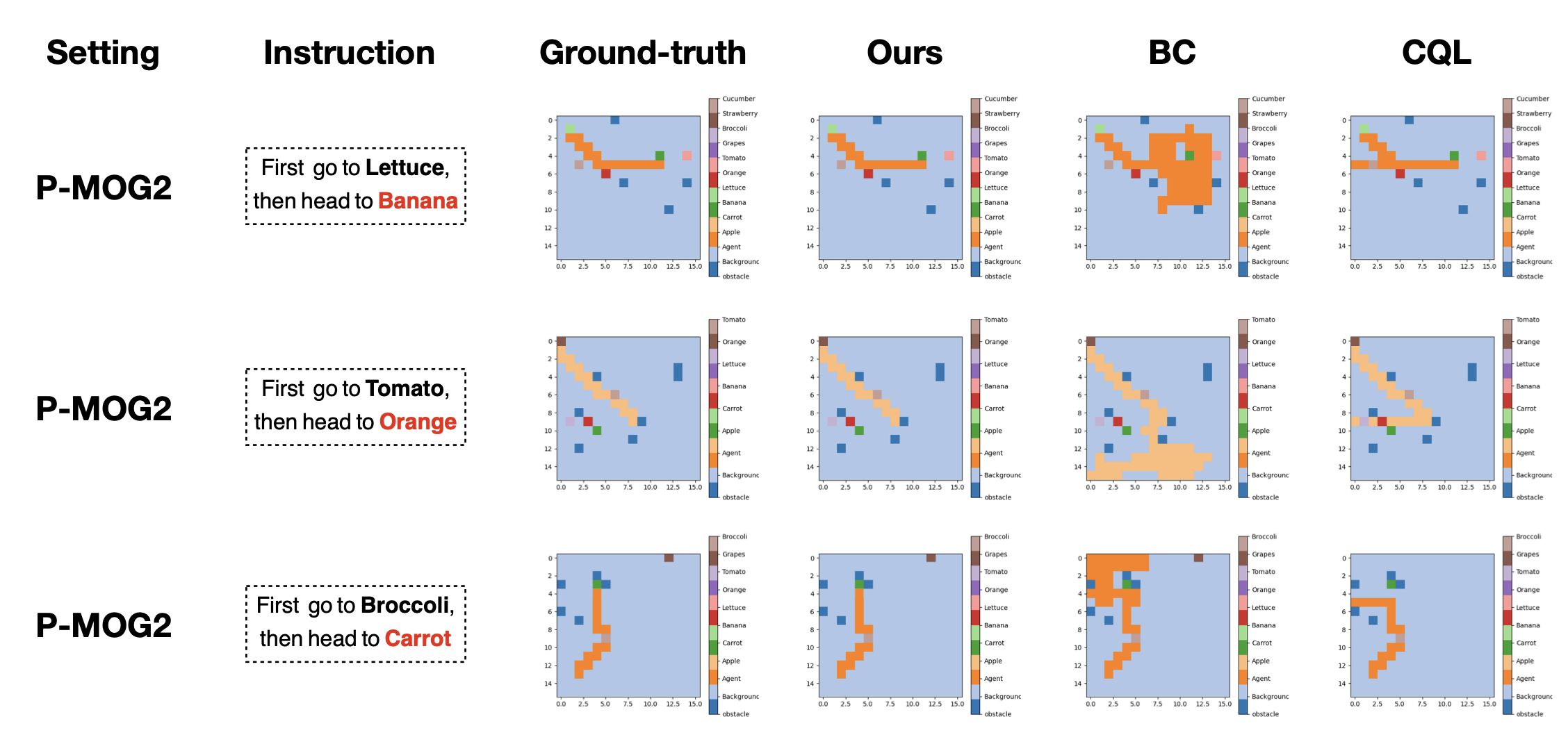

Comparative Analysis: Our model successfully navigates first to a visible reference object and then to an initially unseen target object, outperforming baseline models like BC and CQL that often take indirect routes or fail to reach the target.
